To use all functions of this page, please activate cookies in your browser.

my.chemeurope.com

With an accout for my.chemeurope.com you can always see everything at a glance – and you can configure your own website and individual newsletter.

- My watch list

- My saved searches

- My saved topics

- My newsletter

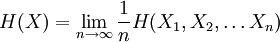

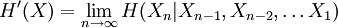

Entropy rate

The entropy rate of a stochastic process is, informally, the time density of the average information in the process. For stochastic processes with a countable index, the entropy rate $H(X)$ is the limit of the joint entropy of $n$ members of the process $X_k$ divided by $n$, as $n$ tends to infinity:

$$H(X) = \lim_{n \to \infty} \frac{1}{n} H(X_1, X_2, \dots, X_n)$$

when the limit exists. An alternative, related quantity is:

$$H'(X) = \lim_{n \to \infty} H(X_n \mid X_{n-1}, X_{n-2}, \dots, X_1)$$

For strongly stationary stochastic processes, $H(X) = H'(X)$.

Entropy Rates for Markov Chains

A stochastic process defined by a Markov chain that is irreducible and aperiodic (and, on an infinite state space, positive recurrent) has a unique stationary distribution, so its entropy rate is independent of the initial distribution. For example, for such a Markov chain $Y_k$ defined on a countable number of states, given the transition matrix $P_{ij}$, $H(Y)$ is given by:

$$H(Y) = -\sum_{i,j} \mu_i P_{ij} \log P_{ij}$$

where $\mu_i$ is the stationary distribution of the chain.

A simple consequence of this definition is that the entropy rate of an i.i.d. stochastic process equals the entropy of any individual member of the process.
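As an illustration, the Markov-chain expression $H(Y) = -\sum_{i,j} \mu_i P_{ij} \log P_{ij}$ can be evaluated numerically for a finite chain. The following is a minimal sketch, not a reference implementation: the function names `stationary_distribution` and `entropy_rate`, the use of NumPy, the choice of base-2 logarithms, and the example matrices are all assumptions made for this example. It also assumes a finite, irreducible chain, so that the stationary distribution is unique.

```python
import numpy as np

def stationary_distribution(P):
    """Solve mu P = mu with sum(mu) = 1 (assumes a finite, irreducible chain)."""
    n = P.shape[0]
    # Stack the balance equations (P^T - I) mu = 0 with the normalization row.
    A = np.vstack([P.T - np.eye(n), np.ones((1, n))])
    b = np.zeros(n + 1)
    b[-1] = 1.0
    mu, *_ = np.linalg.lstsq(A, b, rcond=None)
    return mu

def entropy_rate(P, base=2.0):
    """Entropy rate -sum_ij mu_i P_ij log P_ij, in units set by `base`."""
    mu = stationary_distribution(P)
    # Use the convention 0 * log 0 = 0 for forbidden transitions.
    with np.errstate(divide="ignore", invalid="ignore"):
        log_p = np.where(P > 0, np.log(P), 0.0)
    return -np.sum(mu[:, None] * P * log_p) / np.log(base)

# Hypothetical two-state chain (values chosen only for illustration).
P_markov = np.array([[0.9, 0.1],
                     [0.5, 0.5]])

# An i.i.d. process viewed as a Markov chain: every row is the same distribution.
p = np.array([0.5, 0.25, 0.25])
P_iid = np.tile(p, (3, 1))

rate_markov = entropy_rate(P_markov)
rate_iid = entropy_rate(P_iid)
entropy_single = -np.sum(p * np.log2(p))  # entropy of one member, in bits
```

In the i.i.d. case every row of the transition matrix equals the common distribution, so `rate_iid` should agree with `entropy_single` up to floating-point error, which matches the consequence stated above.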


This article is licensed under the GNU Free Documentation License. It uses material from the Wikipedia article "Entropy_rate". A list of authors is available in Wikipedia.