To use all functions of this page, please activate cookies in your browser.

my.chemeurope.com

With an accout for my.chemeurope.com you can always see everything at a glance – and you can configure your own website and individual newsletter.

- My watch list

- My saved searches

- My saved topics

- My newsletter

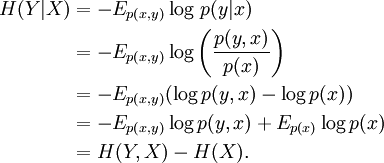

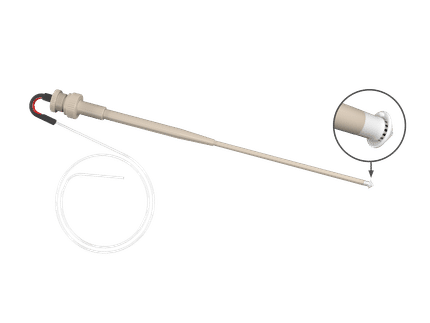

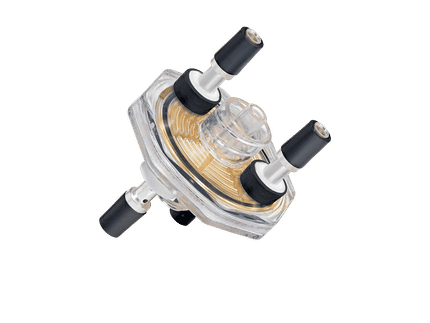

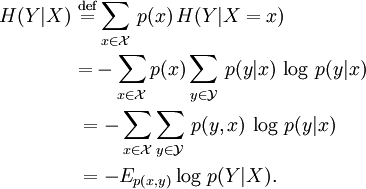

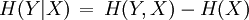

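Conditional entropy

In information theory, the conditional entropy (or equivocation) quantifies the remaining entropy (i.e. uncertainty) of a random variable Y given that the value of a second random variable X is known. It is referred to as the entropy of Y conditional on X, and is written H(Y | X). Like other entropies, the conditional entropy is measured in bits, nats, or hartleys.

Given a discrete random variable X with support $\mathcal{X}$ and a discrete random variable Y with support $\mathcal{Y}$, the conditional entropy of Y given X is defined as

$$
H(Y \mid X) = \sum_{x \in \mathcal{X}} p(x)\, H(Y \mid X = x) = -\sum_{x \in \mathcal{X}} p(x) \sum_{y \in \mathcal{Y}} p(y \mid x) \log p(y \mid x) = -\sum_{x \in \mathcal{X}} \sum_{y \in \mathcal{Y}} p(x, y) \log p(y \mid x).
$$

From this definition and Bayes' theorem, the chain rule for conditional entropy is

$$
H(Y \mid X) = H(X, Y) - H(X).
$$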

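This is true because

$$
\begin{aligned}
H(Y \mid X) &= -\sum_{x \in \mathcal{X}} \sum_{y \in \mathcal{Y}} p(x, y) \log p(y \mid x) \\
&= -\sum_{x \in \mathcal{X}} \sum_{y \in \mathcal{Y}} p(x, y) \log \frac{p(x, y)}{p(x)} \\
&= -\sum_{x \in \mathcal{X}} \sum_{y \in \mathcal{Y}} p(x, y) \log p(x, y) + \sum_{x \in \mathcal{X}} \sum_{y \in \mathcal{Y}} p(x, y) \log p(x) \\
&= H(X, Y) + \sum_{x \in \mathcal{X}} p(x) \log p(x) \\
&= H(X, Y) - H(X).
\end{aligned}
$$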
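The chain rule H(Y | X) = H(X,Y) - H(X) can be checked numerically. The following is a minimal sketch, not a reference implementation: the joint distribution `joint` is a made-up example, and the helper names `entropy`, `marginal_x`, and `conditional_entropy` are chosen here for illustration. Entropies are computed in bits (base-2 logarithm).

```python
from math import log2


def entropy(probs):
    """Shannon entropy in bits of an iterable of probabilities."""
    return -sum(p * log2(p) for p in probs if p > 0)


def marginal_x(joint):
    """Marginal p(x) from a joint pmf given as {(x, y): p(x, y)}."""
    p_x = {}
    for (x, _), p in joint.items():
        p_x[x] = p_x.get(x, 0.0) + p
    return p_x


def conditional_entropy(joint):
    """H(Y|X) = -sum_{x,y} p(x,y) * log2(p(x,y) / p(x))."""
    p_x = marginal_x(joint)
    return -sum(
        p * log2(p / p_x[x]) for (x, _), p in joint.items() if p > 0
    )


# Hypothetical joint distribution, chosen only for illustration.
joint = {
    ("a", 0): 0.25, ("a", 1): 0.25,
    ("b", 0): 0.40, ("b", 1): 0.10,
}

h_xy = entropy(joint.values())               # H(X,Y)
h_x = entropy(marginal_x(joint).values())    # H(X)
h_y_given_x = conditional_entropy(joint)     # H(Y|X) from the definition

# Both values should agree up to floating-point rounding.
print(h_y_given_x, h_xy - h_x)
```

The first printed value comes directly from the definition, the second from the chain rule; any difference between them should be on the order of floating-point rounding error.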

Intuitively, the combined system contains H(X,Y) bits of information: we need H(X,Y) bits of information to reconstruct its exact state. If we learn the value of X, we have gained H(X) bits of information, and the system has H(Y | X) bits of uncertainty remaining.

H(Y | X) = 0 if and only if the value of Y is completely determined by the value of X. Conversely, H(Y | X) = H(Y) if and only if Y and X are independent random variables.

In quantum information theory, the conditional entropy is generalized to the conditional quantum entropy.
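The two boundary cases, H(Y | X) = 0 when Y is determined by X and H(Y | X) = H(Y) when X and Y are independent, can be illustrated with a short sketch. The distributions below are invented for this example, and the table layout `rows[i][j] = p(X = i, Y = j)` is an assumption of this sketch, not a convention from the article.

```python
from math import isclose, log2


def entropy(probs):
    """Shannon entropy in bits of an iterable of probabilities."""
    return -sum(p * log2(p) for p in probs if p > 0)


def cond_entropy(rows):
    """H(Y|X) in bits, where rows[i][j] = p(X = i, Y = j)."""
    total = 0.0
    for row in rows:
        p_x = sum(row)  # marginal probability of this value of X
        for p_xy in row:
            if p_xy > 0:
                total -= p_xy * log2(p_xy / p_x)
    return total


# Case 1 (hypothetical): Y is a function of X, so each row has a
# single nonzero entry and no uncertainty about Y remains.
determined = [[0.3, 0.0],
              [0.0, 0.7]]

# Case 2 (hypothetical): X and Y independent, p(x, y) = p(x) * p(y).
p_x = [0.3, 0.7]
p_y = [0.5, 0.25, 0.25]
independent = [[px * py for py in p_y] for px in p_x]

h_determined = cond_entropy(determined)
h_independent = cond_entropy(independent)
h_y = entropy(p_y)

print(isclose(h_determined, 0.0, abs_tol=1e-12))
print(isclose(h_independent, h_y, abs_tol=1e-12))
```

In the first case knowing X leaves nothing to learn about Y; in the second, knowing X does not reduce the uncertainty of Y at all.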

This article is licensed under the GNU Free Documentation License. It uses material from the Wikipedia article "Conditional_entropy". A list of authors is available in Wikipedia.
