
Fermi–Dirac statistics

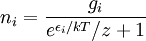

In statistical mechanics, Fermi-Dirac statistics is a particular case of particle statistics developed by Enrico Fermi and Paul Dirac that determines the statistical distribution of fermions over the energy states for a system in thermal equilibrium. In other words, it is a probability of a given energy level to be occupied by a fermion. More generally, Fermi-Dirac statistics means that the total wavefunction of fermions must be antisymmetric under an exchange of every pair of fermions (that is, if one exchanges any fermion with another, the wavefunction gets an overall minus sign). Fermions are particles which are indistinguishable and obey the Pauli exclusion principle, i.e., no more than one particle may occupy the same quantum state at the same time. Fermions have half-integral spin. Statistical thermodynamics is used to describe the behaviour of large numbers of particles. A collection of non-interacting fermions is called a Fermi gas. F-D statistics was introduced in 1926 by Enrico Fermi and Paul Dirac and applied in 1926 by Ralph Fowler to describe the collapse of a star to a white dwarf and in 1927 by Arnold Sommerfeld to electrons in metals. Pascual Jordan developed in 1925 the same statistics which he called Pauli statistics. The problem was that his referee Max Born forgot the paper for six months before finding it again. In the meantime it was independently discovered by Enrico Fermi and Paul Dirac.[1] For F-D statistics, the expected number of particles in states with energy εi is where:

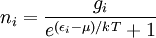

In the case where μ is the Fermi energy
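As a minimal sketch of how the Fermi function can be evaluated numerically, the Python snippet below computes the occupation probability of a single state. The function name `fermi_function`, the choice of electron-volts as the energy unit, and the sample value of 5.0 eV for the Fermi energy are assumptions made for illustration; they do not come from the article.

```python
import math

# Boltzmann constant in eV/K (energies below are assumed to be in eV).
K_B_EV = 8.617333262e-5


def fermi_function(energy_ev, fermi_energy_ev, temperature_k):
    """Return F(E) = 1 / (exp((E - E_F) / kT) + 1) for T > 0."""
    if temperature_k <= 0:
        raise ValueError("temperature must be positive")
    x = (energy_ev - fermi_energy_ev) / (K_B_EV * temperature_k)
    # Evaluate with the exponent kept non-positive to avoid overflow.
    if x >= 0:
        e = math.exp(-x)
        return e / (1.0 + e)
    return 1.0 / (1.0 + math.exp(x))


if __name__ == "__main__":
    # Hypothetical Fermi energy of 5.0 eV at room temperature.
    for energy in (4.9, 5.0, 5.1):
        print(energy, fermi_function(energy, 5.0, 300.0))
```

At the Fermi energy the function returns one half at any positive temperature, and the values at equal distances above and below the Fermi energy add up to one.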

Which distribution to use

Fermi-Dirac and Bose-Einstein statistics apply when quantum effects have to be taken into account and the particles are considered "indistinguishable". Quantum effects appear when the concentration of particles satisfies $N/V \ge n_q$, where $n_q$ is the quantum concentration. The quantum concentration is the concentration at which the interparticle distance equals the thermal de Broglie wavelength, i.e. at which the wavefunctions of the particles are touching but not overlapping. Because the quantum concentration increases with temperature, high temperatures put most systems in the classical limit unless they have a very high density, as in a white dwarf. Fermi-Dirac statistics apply to fermions (particles that obey the Pauli exclusion principle); Bose-Einstein statistics apply to bosons. Both become Maxwell-Boltzmann statistics at high temperatures or low concentrations.

Maxwell-Boltzmann statistics are often described as the statistics of "distinguishable" classical particles. In other words, the configuration of particle A in state 1 and particle B in state 2 is different from the case where particle B is in state 1 and particle A is in state 2. When this idea is carried out fully, it yields the proper (Boltzmann) distribution of particles in the energy states, but yields non-physical results for the entropy, as embodied in the Gibbs paradox. These problems disappear when it is realized that all particles are in fact indistinguishable. Both the Fermi-Dirac and Bose-Einstein distributions approach the Maxwell-Boltzmann distribution in the limit of high temperature and low density, without the need for any ad hoc assumptions.

Maxwell-Boltzmann statistics are particularly useful for studying gases. Fermi-Dirac statistics are most often used for the study of electrons in solids. As such, they form the basis of semiconductor device theory and electronics.

A derivation

Consider a single-particle state of a multiparticle system, whose energy is where

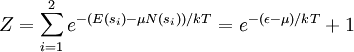

In the present context, we take our system to be a fixed single-particle state (not a particle). So our system has energy For fermions, a state can only be either occupied by a single particle or unoccupied. Therefore our system has multiplicity two: occupied by one particle, or unoccupied, called s1 and s2 respectively. We see that

For a grand canonical ensemble, probability of a system being in the microstate sα is given by

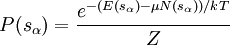

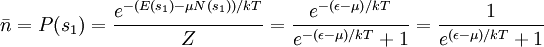

Our state being occupied by a particle means the system is in microstate s1, whose probability is

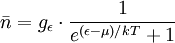

Note that if the energy level ε has degeneracy

This number is then the expected number of particles in the totality of the states with energy ε. For all temperature T, In the limit
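The two-microstate Gibbs sum used in this derivation can be checked numerically. The sketch below, with illustrative function names and energies in arbitrary units (kT is passed directly as a number), builds the grand partition function from the occupied and unoccupied microstates and compares the resulting occupation probability with the closed-form Fermi-Dirac expression.

```python
import math


def occupation_from_gibbs_sum(epsilon, mu, kT):
    """Probability that the single-particle state is occupied,
    computed from the two-term grand partition function."""
    # Each microstate is (energy E, particle number N):
    # s1 = occupied by one fermion, s2 = unoccupied.
    microstates = [(epsilon, 1), (0.0, 0)]
    weights = [math.exp(-(E - mu * N) / kT) for E, N in microstates]
    Z = sum(weights)          # Gibbs sum over both microstates
    return weights[0] / Z     # P(s1)


def fermi_dirac(epsilon, mu, kT):
    """Closed-form Fermi-Dirac occupation 1 / (exp((eps - mu)/kT) + 1)."""
    return 1.0 / (math.exp((epsilon - mu) / kT) + 1.0)


if __name__ == "__main__":
    for eps in (0.1, 0.5, 0.9):
        print(eps, occupation_from_gibbs_sum(eps, 0.5, 0.025),
              fermi_dirac(eps, 0.5, 0.025))
```

The two functions agree to rounding error, a state at the chemical potential is half occupied, and lowering kT sharpens the occupation toward a step at the chemical potential. The direct exponentials are fine for moderate values of (ε − μ)/kT; very large ratios would need an overflow-safe form.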

It may be of interest here to note that, in general the chemical potential is temperature-dependent. However, for systems well below the Fermi temperature Another derivationIn the previous derivation, we have made use of the grand partition function (or Gibbs sum over states). Equivalently, the same result can be achieved by directly analyzing the multiplicities of the system. Suppose there are two fermions placed in a system with four energy levels. There are six possible arrangements of such a system, which are shown in the diagram below. ε1 ε2 ε3 ε4 A * * B * * C * * D * * E * * F * * Each of these arrangements is called a microstate of the system. Assume that, at thermal equilibrium, each of these microstates will be equally likely, subject to the constraints that there be a fixed total energy and a fixed number of particles. Depending on the values of the energy for each state, it may be that total energy for some of these six combinations is the same as others. Indeed, if we assume that the energies are multiples of some fixed value ε, the energies of each of the microstates become:

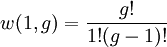

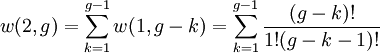

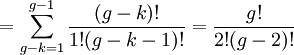

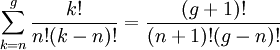

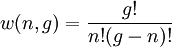

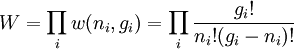

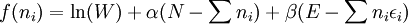

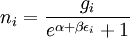

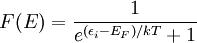

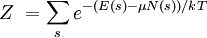
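The two-fermion, four-level example can be enumerated directly. The following is a small sketch under two assumptions that are not spelled out in the text: the level energies are taken as 1ε, 2ε, 3ε and 4ε, and the labels A to F are assigned in lexicographic order of the occupied levels. The variable names are illustrative.

```python
from collections import defaultdict
from itertools import combinations, permutations

# Level energies in units of epsilon (assumed: level i has energy i * epsilon).
levels = [1, 2, 3, 4]
labels = "ABCDEF"

# Indistinguishable fermions: choose 2 distinct levels, order irrelevant.
fermion_microstates = list(combinations(levels, 2))

# Group microstate labels by total energy (in units of epsilon).
by_energy = defaultdict(list)
for label, occupied in zip(labels, fermion_microstates):
    by_energy[sum(occupied)].append(label)

# Distinguishable particles obeying exclusion: order now matters.
distinguishable_microstates = list(permutations(levels, 2))

if __name__ == "__main__":
    for energy in sorted(by_energy):
        print(f"{energy} epsilon:", by_energy[energy])
    print(len(fermion_microstates), len(distinguishable_microstates))
```

Grouping by total energy shows which microstates share an energy and are therefore equally likely once the total energy is fixed. Counting ordered pairs instead of unordered ones gives the number of microstates for distinguishable particles.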

So if we know that the system has an energy of 5ε, we can conclude that it is equally likely to be in state C or state D. Note that if the particles were distinguishable (the classical case), there would be twelve microstates altogether, rather than six.

Now suppose we have a number of energy levels, labeled by index $i$, each level having energy $\varepsilon_i$ and containing a total of $n_i$ particles. Suppose each level contains $g_i$ distinct sublevels, all of which have the same energy, and which are distinguishable. For example, two particles may have different momenta, in which case they are distinguishable from each other, yet they can still have the same energy. The value of $g_i$ associated with level $i$ is called the "degeneracy" of that energy level. The Pauli exclusion principle states that only one fermion can occupy any such sublevel.

Let $w(n, g)$ be the number of ways of distributing $n$ particles among the $g$ sublevels of an energy level. There are $g$ ways of putting one particle into a level with $g$ sublevels, so that $w(1, g) = g$, which we will write as:

$$w(1, g) = \frac{g!}{1!\,(g-1)!}$$

We can distribute 2 particles among $g$ sublevels by putting one in the first sublevel and then placing the remaining particle in one of the remaining $(g - 1)$ sublevels, or by putting one in the second sublevel and then placing the remaining particle in one of the remaining $(g - 2)$ sublevels, and so on, so that $w(2, g) = w(1, g - 1) + w(1, g - 2) + \dots + w(1, 1)$, or

$$w(2, g) = \sum_{k=1}^{g-1} w(1, g-k) = \sum_{k=1}^{g-1} \frac{(g-k)!}{1!\,(g-k-1)!} = \frac{g!}{2!\,(g-2)!}$$

where we have used the following theorem involving binomial coefficients:

$$\sum_{k=n}^{g} \frac{k!}{n!\,(k-n)!} = \frac{(g+1)!}{(n+1)!\,(g-n)!}$$

Continuing this process, we can see that $w(n, g)$ is just a binomial coefficient:

$$w(n, g) = \frac{g!}{n!\,(g-n)!}$$

The number of ways that a set of occupation numbers $n_i$ can be realized is the product of the ways that each individual energy level can be populated:

$$W = \prod_i w(n_i, g_i) = \prod_i \frac{g_i!}{n_i!\,(g_i - n_i)!}$$

Following the same procedure used in deriving the Maxwell-Boltzmann statistics, we wish to find the set of $n_i$ for which $W$ is maximized, subject to the constraint that there be a fixed number of particles and a fixed energy.
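The counting argument for w(n, g) can be verified with a short recursion. This is a sketch, not part of the original derivation: the functions `w` and `total_ways` are illustrative names, and the recursion simply places the first particle in sublevel k and distributes the remaining n − 1 particles among the g − k sublevels after it.

```python
from functools import lru_cache
from math import comb, prod


@lru_cache(maxsize=None)
def w(n, g):
    """Ways to place n indistinguishable fermions in g sublevels,
    at most one per sublevel, counted by the recursive argument."""
    if n == 0:
        return 1          # one way to place no particles
    if n > g:
        return 0          # exclusion: not enough sublevels
    # First particle in sublevel k; the rest go in the g - k later sublevels.
    return sum(w(n - 1, g - k) for k in range(1, g + 1))


def total_ways(occupations, degeneracies):
    """W = product over levels of the binomial coefficient C(g_i, n_i)."""
    return prod(comb(g, n) for n, g in zip(occupations, degeneracies))


if __name__ == "__main__":
    print(w(2, 4), comb(4, 2))
    print(total_ways([1, 2], [2, 3]))
```

For every n and g tried, the recursion reproduces the binomial coefficient g!/(n!(g − n)!), which is what allows W to be written as a product of binomial coefficients.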
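We constrain our solution using Lagrange multipliers, forming the function:

$$f(n_i) = \ln W + \alpha\Big(N - \sum_i n_i\Big) + \beta\Big(E - \sum_i n_i \varepsilon_i\Big)$$

where $N$ is the total number of particles and $E$ is the total energy. Again using Stirling's approximation for the factorials,

$$\ln W \approx \sum_i \big[\, g_i \ln g_i - n_i \ln n_i - (g_i - n_i)\ln(g_i - n_i) \,\big],$$

taking the derivative with respect to $n_i$ and setting the result to zero gives

$$\ln\frac{g_i - n_i}{n_i} - \alpha - \beta\varepsilon_i = 0,$$

and solving for $n_i$ yields the Fermi-Dirac population numbers:

$$n_i = \frac{g_i}{e^{\alpha + \beta\varepsilon_i} + 1}$$

It can be shown thermodynamically that $\beta = 1/kT$, where $k$ is Boltzmann's constant and $T$ is the temperature, and that $\alpha = -\mu/kT$, where $\mu$ is the chemical potential, so that finally:

$$n_i = \frac{g_i}{e^{(\varepsilon_i - \mu)/kT} + 1}$$

Note that the above formula is sometimes written:

$$n_i = \frac{g_i}{e^{\varepsilon_i/kT}/z + 1}$$

where $z = \exp(\mu/kT)$ is the fugacity.

References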

- Carter, Ashley H., "Classical and Statistical Thermodynamics", Prentice-Hall, New Jersey, 2001.
- Griffiths, David J., "Introduction to Quantum Mechanics", 2nd ed., Pearson Education, 2005.
- Kittel, Charles and Kroemer, Herbert, "Thermal Physics", 2nd ed., W. H. Freeman, 1980.
- Pethick, C. J. and Smith, H., "Bose-Einstein Condensation in Dilute Gases", Cambridge University Press, 2002.


This article is licensed under the GNU Free Documentation License. It uses material from the Wikipedia article "Fermi–Dirac_statistics". A list of authors is available in Wikipedia.
