Sensor stickers transform the human body into a multi-touch surface


They resemble ultra-thin patches, their shape can be freely chosen, and they work anywhere on the body. With such sensors on the skin, mobile devices like smartphones and smartwatches can be operated more intuitively and discreetly than before. Computer scientists at Saarland University have now developed sensors that even laypeople can produce with a little effort. The special feature: for the first time, the sensors make it possible to capture touches on the body very precisely, even from multiple fingers. The researchers have successfully tested their prototypes in four different applications.

Saarbrücken computer scientists have developed novel skin sensors that allow mobile devices to be controlled from any point on the body.

Universität des Saarlandes

“The human body offers a large surface that is easy to access, even without eye contact,” says Jürgen Steimle, a professor of computer science at Saarland University, explaining the researchers' interest in this literal human-computer interface. Until now, however, this vision could not be realized, because the available sensors could neither measure touches precisely enough nor capture them from multiple fingertips simultaneously. Jürgen Steimle and his research group have now developed a sensor that does both.

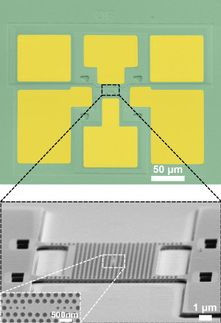

The sensor, named Multi-Touch Skin, is similar in structure to the touch displays familiar from smartphones. Two electrode layers, one arranged in rows and the other in columns, are stacked on top of each other to form a kind of coordinate system, and the electrical capacitance is measured continuously at each intersection. Where a finger touches the sensor, the capacitance drops, because the finger conducts electricity and allows charge to drain away. These changes are captured at every intersection, so touches from multiple fingers can be detected at once. To find the best balance between conductivity, mechanical robustness and flexibility, the researchers evaluated different materials. If, for example, silver is chosen as the conductor, PVC plastic as the insulating material between the electrodes, and PET plastic as the substrate, then the sensor can be printed using a household inkjet printer in less than a minute.
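The sensing principle described above can be illustrated with a minimal sketch. It is not the researchers' implementation: the function name `detect_touches`, the `min_drop` threshold and the use of arbitrary capacitance units are assumptions made for illustration. The sketch compares each intersection against an untouched baseline, marks cells whose capacitance has dropped, and groups neighboring cells into one touch per finger.

```python
from collections import deque


def detect_touches(baseline, measured, min_drop=0.2):
    """Return one (row, col) centroid per touched region.

    baseline, measured: 2D lists of capacitance readings, one value per
    row/column intersection (arbitrary units, assumed for illustration).
    min_drop: hypothetical threshold; a cell counts as touched when its
    capacitance has fallen by at least this much below the baseline.
    """
    rows, cols = len(baseline), len(baseline[0])
    touched = [
        [baseline[r][c] - measured[r][c] >= min_drop for c in range(cols)]
        for r in range(rows)
    ]
    seen = [[False] * cols for _ in range(rows)]
    touches = []

    for r in range(rows):
        for c in range(cols):
            if not touched[r][c] or seen[r][c]:
                continue
            # Breadth-first search collects all adjacent touched cells,
            # so one finger covering several intersections is one touch.
            queue = deque([(r, c)])
            seen[r][c] = True
            cells = []
            while queue:
                y, x = queue.popleft()
                cells.append((y, x))
                for dy, dx in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                    ny, nx = y + dy, x + dx
                    if (0 <= ny < rows and 0 <= nx < cols
                            and touched[ny][nx] and not seen[ny][nx]):
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            center_row = sum(y for y, _ in cells) / len(cells)
            center_col = sum(x for _, x in cells) / len(cells)
            touches.append((center_row, center_col))
    return touches
```

Because every intersection is evaluated independently, two fingers placed far apart produce two separate regions, which is what distinguishes a multi-touch grid from a sensor that reports a single contact point.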

“So that we could really use the sensors on all parts of the body, we had to free them from their rectangular shape. That was an important aspect,” explains Aditya Shekhar Nittala, who is doing his doctoral research in Jürgen Steimle's group. The scientists therefore developed software for designers, so that they can create their own desired sensor shape. In the computer program, the designer first draws the outer shape of the sensor, then outlines the area within this outer shape that is to be touch-sensitive. A special algorithm then calculates the layout that will optimally cover this defined area with touch-sensitive electrodes. Finally, the sensor is printed.
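The article does not describe how the layout algorithm works, so the following is only a naive stand-in for the idea of covering a freely drawn area with electrodes, not the researchers' method. It assumes the touch-sensitive area is given as a polygon and simply keeps every grid intersection whose center lies inside it. The names `point_in_polygon`, `grid_layout` and the `pitch` parameter (electrode spacing) are hypothetical.

```python
import math


def point_in_polygon(x, y, polygon):
    """Ray-casting test: True if (x, y) lies strictly inside the polygon.

    polygon: list of (x, y) vertices in order. Points exactly on an edge
    may be classified either way.
    """
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Only edges that straddle the horizontal line through y matter.
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def grid_layout(polygon, pitch):
    """Return (row, col) indices of grid cells whose center is inside.

    pitch: assumed spacing between neighboring electrodes, in the same
    units as the polygon coordinates.
    """
    xs = [p[0] for p in polygon]
    ys = [p[1] for p in polygon]
    min_x, min_y = min(xs), min(ys)
    n_cols = math.ceil((max(xs) - min_x) / pitch)
    n_rows = math.ceil((max(ys) - min_y) / pitch)

    cells = []
    for row in range(n_rows):
        for col in range(n_cols):
            center_x = min_x + (col + 0.5) * pitch
            center_y = min_y + (row + 0.5) * pitch
            if point_in_polygon(center_x, center_y, polygon):
                cells.append((row, col))
    return cells
```

For an L-shaped area, for example, the cell in the missing corner is dropped while the row and column electrodes of the remaining cells stay aligned. A real layout tool would also have to route the electrode traces to the edge of the sensor, which this sketch ignores.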

The usefulness of this new freedom of form is made particularly clear by one of the four test prototypes, each of which the scientists produced with their novel fabrication methods. Shaped like an ear, this sensor is placed on the back of a test participant's right ear. The participant can swipe upward or downward on it to use it as a volume control. Swiping right or left changes the song being played, while touching with a flat finger stops the song.
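The gesture mapping of the ear prototype can be sketched as a small classifier. This is an illustration under stated assumptions, not the prototype's code: the function name `classify_gesture`, the thresholds `flat_cells` and `min_travel`, and the choices that an upward swipe raises the volume and a rightward swipe skips to the next song are all hypothetical, since the article only says that the swipe directions control volume and song selection.

```python
def classify_gesture(start, end, contact_cells, flat_cells=6, min_travel=2.0):
    """Map one touch trace to a music-player command.

    start, end: (row, col) positions of the first and last sample of the
    touch, with rows increasing downward on the sensor grid.
    contact_cells: number of intersections covered by the finger.
    flat_cells: assumed size above which a touch counts as a flat finger.
    min_travel: assumed minimum movement (in cells) for a swipe.
    """
    # A flat finger covers many intersections at once: stop playback.
    if contact_cells >= flat_cells:
        return "stop"

    d_row = end[0] - start[0]
    d_col = end[1] - start[1]
    if max(abs(d_row), abs(d_col)) < min_travel:
        return "none"

    # The dominant axis decides between volume and song change.
    if abs(d_row) >= abs(d_col):
        return "volume_up" if d_row < 0 else "volume_down"
    return "next_song" if d_col > 0 else "previous_song"
```

Telling a flat finger apart from a fingertip relies on the contact area, which is only available because the sensor reports every touched intersection rather than a single point.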

For the Saarbrücken scientists, Multi-Touch Skin is further proof that research into on-skin interfaces is worthwhile. In the future, they want to focus on providing even more advanced sensor design programs, and to develop sensors that capture multiple sensory modalities. Their work on Multi-Touch Skin was financed through the Starting Grant “Interactive Skin” from the European Research Council (ERC).

The sensor now being presented was developed by Jürgen Steimle together with Aditya Shekhar Nittala, Anusha Withana, and Narjes Pourjafarian, all members of Steimle's research group. At the international conference “CHI Human Factors in Computing Systems”, which took place on the 26th of April in Montreal, Canada, the Saarbrücken researchers presented their methods, which for the first time allow interaction designers to design and produce skin-like multi-touch sensors for the body.
