New procedures to authenticate foods as a step towards standardisation

Olive oil from Apulia, Pata Negra ham from Spain and 12-year-old single-malt whisky from the Scottish Orkney Islands are all characterised by their quality and their high price. These same properties make a number of other foodstuffs, including fish, honey and meat, attractive targets for adulteration. Although food and ingredients must be traceably documented, the relevant papers can themselves be forged. "Given the worldwide trade in high-quality foods, we need new and unambiguous chemical and analytical methods with which we can detect both known and new adulterations in a way that is recognised by the courts", BfR Vice President Professor Dr. Reiner Wittkowski explained on the occasion of the international symposium "Standardisation of non-targeted analytical methods for food authenticity testing", which took place at the Federal Institute for Risk Assessment (BfR). At the symposium, about 100 scientists from Germany, Europe, North America, Africa, Asia and New Zealand discussed the scientific challenges posed by the standardisation and validation of new methods and procedures for authenticity testing.

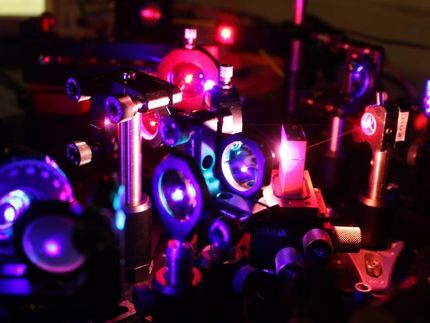

At the heart of the discussions were non-targeted methods for detecting food adulteration and verifying origin. Non-targeted means that testing does not look for one specific type of adulteration but for deviations of any type. Non-targeted procedures consist of physical and chemical analytical methods combined with statistical procedures and databases. Genuine samples of a specific food are analysed to capture the natural variation in its composition, and the resulting chemical fingerprints are stored in a reference database. Comparing a product's spectrum with the authentic spectra of the expected product makes it possible to identify numerous deviations, for example those caused by deliberate adulteration.
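The comparison against a reference database can be illustrated with a minimal Python sketch. It is not a method presented at the symposium: it assumes each fingerprint is a short list of signal intensities, and it substitutes a simple per-feature z-score for the multivariate statistical models used in practice. All function names, the threshold and the numbers are hypothetical and chosen only for illustration.

```python
from statistics import mean, stdev


def build_reference(genuine_samples):
    """Summarise genuine fingerprints as (mean, standard deviation) per feature.

    This captures the natural variation of each measured feature.
    Assumes at least two samples and a non-zero spread in every feature.
    """
    columns = list(zip(*genuine_samples))
    return [(mean(col), stdev(col)) for col in columns]


def deviation_score(sample, reference):
    """Return the largest absolute z-score of the sample over all features."""
    return max(abs(x - mu) / sd for x, (mu, sd) in zip(sample, reference))


def is_suspicious(sample, reference, threshold=3.0):
    """Flag a sample whose deviation exceeds the (assumed) threshold.

    Non-targeted: any feature may trigger the flag; no specific
    adulterant is looked for.
    """
    return deviation_score(sample, reference) > threshold


# Made-up fingerprints of genuine samples (three intensities each).
genuine = [
    [1.00, 0.50, 0.20],
    [1.10, 0.48, 0.22],
    [0.95, 0.52, 0.19],
    [1.05, 0.51, 0.21],
    [0.98, 0.49, 0.20],
]
reference = build_reference(genuine)

typical = [1.02, 0.50, 0.21]  # lies within the natural variation
altered = [1.02, 0.50, 0.60]  # third feature far outside the genuine range

print(is_suspicious(typical, reference))
print(is_suspicious(altered, reference))
```

With these invented values, the first sample stays within the variation of the genuine samples and the second is flagged. A realistic procedure would work on full spectra with many more genuine samples, and the choice of model and threshold is exactly the kind of step that would need validation.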

Routine application of these new non-targeted procedures requires that the results be valid and comparable between laboratories. Important fundamentals are still missing here, notably standardised and validated analytical methods, including the necessary statistical interpretation, and databases that are jointly accessible and usable.

The symposium therefore had three themes. The first was the development of strategies for standardising the analytical methods and the statistical models used to interpret the analytical data. The second was the establishment and management of jointly used databases, with the goal of channelling relevant information in suitable formats so that laboratories worldwide can use and process it cooperatively. The third was the quality assurance measures required for the analytical methods, for the data interpretation, and for data storage and administration in the databases.